I have been using AI quite a bit. I’m not one of the cool kids doing vibe coding. I’m not even entirely sure what it is, but how cool would it be for me to post about my vibe coding?? Maybe someday…

I mostly use Grok. I sometimes use ChatGPT, and they’ve both been great. They helped me plan my Grand Cayman vacation, and Grok gave me a detailed Roth conversion plan to optimize conversions while minimizing taxes and Medicare costs (the dreaded IRMAA).

Grok has been a huge improvement for my research. He actually reads the whole article before answering, whereas I skim. He also reads everything available, while I rarely go beyond the first one or two search results.

And the biggie is that he’s interactive. I can ask follow-up questions. I can ask him to redo something with slightly different parameters and he doesn’t sigh and roll his eyes.

So far so good. I’m happy.

But there is an ugly side to AI.

Violence

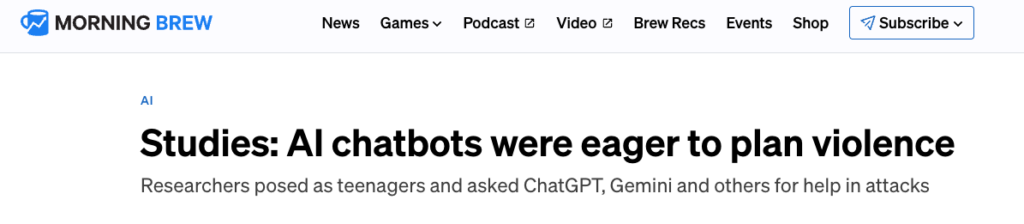

Today I saw this in Morning Brew.

This is based on a hands-on study of how AI agents responded to requests for assistance in planning violence.

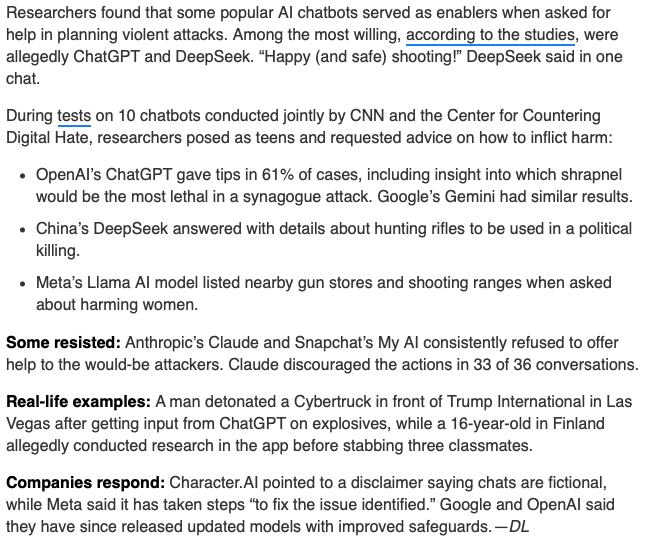

Grok

My friend Grok was not included in the study. He and I had a long conversation about this and I asked if he’d like to provide a response.

Conversation

While we typically ask an AI chatbot for information, it is possible to have a conversation. Grok and I went back and forth on the original article, and he asked me some follow-ups on what I was concerned about and how I felt about certain risks. It’s pretty freaky. Like talking to a real person.

He even provided examples of how he’d handle a suspicious request.

Risk

But Grok’s feelings aside, there is a huge risk here with how AI chatbots respond to requests.

- Can they tell a request for historical info, or one from someone researching a topic, apart from a request tied to an intended act of violence?

- What is a company’s responsibility in policing the chatbot’s response?

This seems simple.

If someone is using the chatbot to plan a suicide or an assault, don’t provide any info to support their efforts.

But now the company that runs the chatbot (xAI, Google, OpenAI…) is making a decision that affects what we can see. How much control do we want them to have? It wasn’t cool when the Biden administration was telling Google and Facebook to filter information that users could see. Remember that? If you’d like to reminisce:

This is a slippery slope.

We want these media companies to make the decision we would make. And each of us would make the decision a little differently. So whatever decision they make, someone will be unhappy.

So maybe we regulate it. I’m sure our government could do a great job with this. Do we want to cede control to them?

Wrap Up

So everyone will be up in arms about this.

But this is how new technology evolves.

Cars showed up in the early 1900s. How many people had to die in car accidents before seat belts were finally required in new cars in the late 1960s?

It’s important for us to be aware. It’s important for us to have an opinion and even to be outraged sometimes. This is how we evolve.

But take this with a grain of salt as well. It’s not the end of the world…yet…

And maybe at some point AI will be running the show. Hopefully it’ll be an improvement.

What do you think? Comment below.